Introduction to Artificial Intelligence

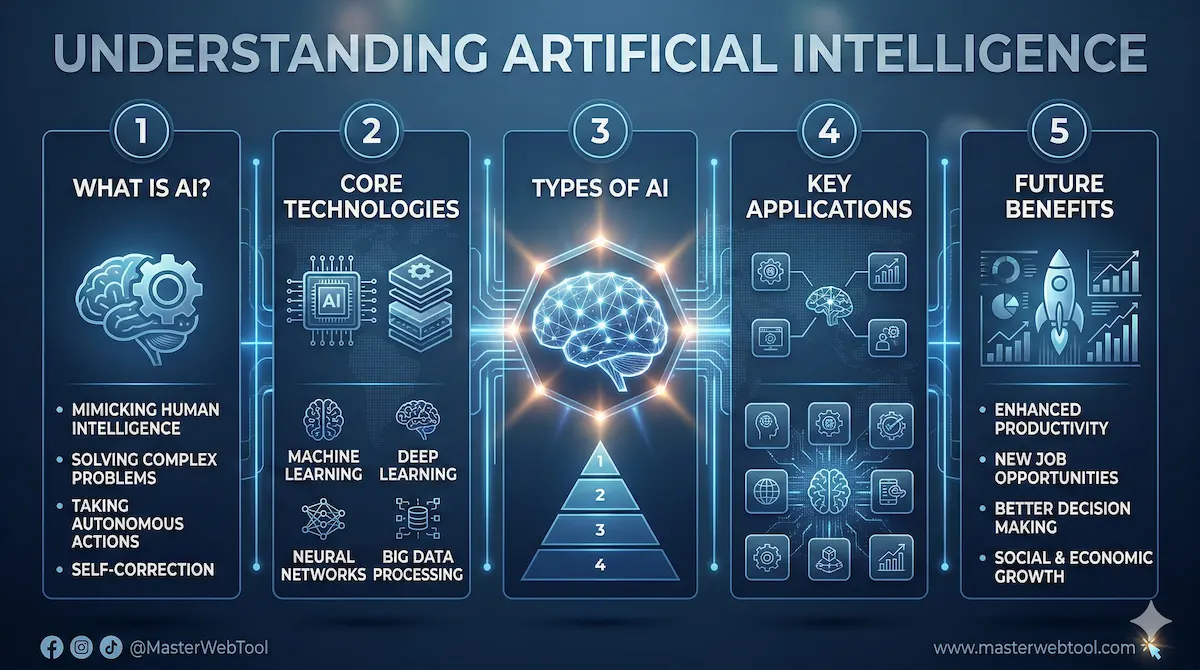

Artificial Intelligence (AI) is a field of computer science that aims to create machines capable of performing tasks that typically require human intelligence. These tasks encompass various capabilities such as learning, reasoning, problem-solving, understanding natural language, perception, and even decision-making. The concept of AI has evolved significantly over the past several decades and has transitioned from theoretical frameworks into concrete applications that permeate numerous industries.

The term “artificial intelligence” was introduced in a 1955 proposal by John McCarthy and colleagues for a 1956 summer workshop at Dartmouth College, where early pioneers of the field gathered to discuss the potential of creating machines that could simulate human cognitive functions. Over the years, AI has seen numerous transformations, from rule-based systems in the early days to modern approaches that leverage vast amounts of data and advanced algorithms. These advancements have led to the development of subfields within AI, including machine learning, natural language processing, computer vision, and robotics.

Today, the relevance of AI cannot be overstated. Businesses and organizations across various sectors increasingly rely on artificial intelligence to enhance efficiency, improve customer experiences, and drive innovation. In healthcare, AI is being utilized for diagnostic tools and personalized medicine; in finance, it aids in risk assessment and fraud detection; and in transportation, autonomous vehicles are being developed using AI technologies. Additionally, AI is reshaping the way individuals interact with technology in their daily lives, from virtual assistants to recommendation systems.

As we move forward, understanding artificial intelligence becomes essential, not only for professionals in the tech industry but also for consumers who navigate a world increasingly influenced by these intelligent machines. AI presents both opportunities and challenges, raising important questions about ethics, privacy, and the future of work. Thus, studying this field is crucial for anyone looking to grasp the dynamics of modern technology and its role in shaping our future.

The Different Types of Artificial Intelligence

Artificial Intelligence (AI) is categorized into several types based on its capabilities and functionality. Understanding these classifications helps delineate the scope and scale of AI applications in various sectors. The primary types of AI are narrow AI, general AI, and superintelligent AI, each exhibiting distinct characteristics and potential uses.

Narrow AI, also referred to as weak AI, is designed to perform a specific task or a limited range of tasks. Examples of narrow AI include voice assistants like Siri and Alexa, recommendation algorithms on platforms like Netflix and Amazon, and even advanced chess-playing programs. This type of AI excels in defined roles but lacks the broader understanding or reasoning capabilities typical of human intelligence.

General AI, conversely, represents a more advanced form of artificial intelligence, with theoretical abilities comparable to human cognition. General AI would possess the capacity to understand, learn, and apply knowledge across a wide range of tasks, much like a human can. Currently, no true general AI exists; it remains an aspirational goal within the field of artificial intelligence. Researchers are exploring various approaches, including machine learning and neural networks, to potentially reach this level of intelligence.

Superintelligent AI is the most speculative and controversial category, denoting a level of intelligence that surpasses human capabilities in virtually every domain. Superintelligent systems could outperform the best human minds in science, creativity, and social skills. While this type of AI remains theoretical and is often the subject of ethical discussions, ongoing advancements in AI technology continue to fuel debates about its societal implications and potential risks.

In summary, distinguishing between narrow AI, general AI, and superintelligent AI elucidates the evolving nature of artificial intelligence and its capabilities, influencing how AI is developed and integrated into various applications across industries.

How AI Works: The Fundamentals

Artificial Intelligence (AI) systems are built from several key components, chiefly algorithms, machine learning, neural networks, and deep learning. Each plays a distinct role in how an AI system processes data and makes decisions.

At its core, an algorithm is a set of rules or instructions designed to perform a specific task or solve a problem. In AI, algorithms analyze data to perform tasks such as classification, regression, or clustering. Some algorithms can learn from the data they are given, improving their accuracy as they see more examples; this is the idea behind machine learning (ML). Machine learning is a subset of AI in which systems learn from data rather than following hand-written rules. When fed large datasets, ML algorithms can identify patterns and make predictions without being explicitly programmed for each case.
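To make “learning from data” concrete, here is a minimal Python sketch of one of the simplest learning algorithms, ordinary least-squares linear regression. Instead of hard-coding the rule, the program estimates a slope and intercept from example pairs. The function name `fit_line` and the sample data are illustrative assumptions, not part of any particular library.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = covariance(x, y) / variance(x); the intercept makes the line pass through the means.
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical training data generated by the hidden rule y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(xs, ys)
prediction = slope * 5.0 + intercept  # predict for an input not seen during training
print(f"slope={slope:.2f} intercept={intercept:.2f} prediction={prediction:.2f}")
# prints: slope=2.00 intercept=1.00 prediction=11.00
```

The program never sees the rule “2x + 1”; it recovers it from the examples, which is the essential difference between learning and explicit programming.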

Neural networks, inspired by the interconnected neurons of the human brain, are another foundational component of AI. They consist of nodes (artificial neurons) linked by weighted connections, loosely analogous to synapses, that process input data. Neural networks excel at recognizing patterns and are particularly effective in tasks such as image recognition and natural language processing. Deep learning is the branch of machine learning that uses neural networks with many layers. Stacking layers lets a model learn increasingly abstract features (for images, roughly from edges to shapes to whole objects), which is why deep models tend to reach high accuracy when trained on large amounts of data.

Together, these fundamental elements form the backbone of AI technology. By using algorithms to process information, coupled with machine learning techniques and neural networks, AI systems can perform complex tasks, make decisions, and improve their performance as they are trained on more data. Understanding these components is essential to grasping how AI operates and how it is transforming industries worldwide.

Key Components of Machine Learning

Machine learning, a crucial subset of artificial intelligence (AI), encompasses various processes that enable systems to learn from data. At its core, machine learning relies heavily on training data, which serves as the foundation for building models. Without high-quality training data, machine learning models are significantly hindered, which reduces their accuracy and effectiveness.

In supervised learning, training data consists of input examples paired with the desired outputs (labels), which lets models identify patterns and make predictions. The success of these models depends heavily on data quality; hence, data preprocessing and cleansing are vital steps to ensure that the algorithms can learn effectively. Additionally, the representation of data, whether structured or unstructured, plays an influential role in the outcomes of machine learning applications.
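Preprocessing takes many forms. The Python sketch below shows two common steps under simplifying assumptions: it drops records with missing values (imputing them is a frequent alternative) and standardizes each numeric feature to zero mean and unit variance so that features on very different scales, such as age and income, contribute comparably. The records and the function names `clean` and `standardize` are hypothetical.

```python
import math

# Hypothetical raw records: (age, income); None marks a missing value.
raw = [(25, 40000), (None, 52000), (35, 61000), (45, None), (55, 80000)]


def clean(rows):
    """Drop any record with a missing field (one simple cleansing strategy)."""
    return [row for row in rows if all(v is not None for v in row)]


def standardize(rows):
    """Rescale each column to zero mean and unit (population) standard deviation."""
    columns = list(zip(*rows))
    scaled_columns = []
    for col in columns:
        mean = sum(col) / len(col)
        # Guard against constant columns, whose standard deviation would be zero.
        std = math.sqrt(sum((v - mean) ** 2 for v in col) / len(col)) or 1.0
        scaled_columns.append([(v - mean) / std for v in col])
    return [list(row) for row in zip(*scaled_columns)]


cleaned = clean(raw)
features = standardize(cleaned)
for row in features:
    print([round(v, 3) for v in row])
```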

Once the training data is established, the next crucial component is the model itself. A machine learning model is a mathematical representation that processes input to produce predictions or classifications. Various algorithms are applied to develop these models, including decision trees, support vector machines, and neural networks. The choice of model greatly impacts the performance of machine learning tasks, making it critical for practitioners to select the appropriate approach based on the specific use case.
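A full decision tree is beyond a short example, but its building block, the decision stump (a tree with a single split), shows what “fitting” a model means: the algorithm searches for the parameter, here a threshold, that makes the fewest mistakes on the training data. This is a minimal sketch assuming one numeric feature and binary labels; the data and the name `fit_stump` are made up, and real decision-tree learners typically choose splits with impurity measures such as Gini impurity or entropy rather than raw error counts.

```python
def fit_stump(xs, labels):
    """Find the threshold t that best separates labels with the rule x > t -> 1.

    Labels are 0 or 1. Candidate thresholds are the midpoints between
    consecutive sorted feature values.
    """
    points = sorted(zip(xs, labels))
    best_t, best_errors = None, len(points) + 1
    for (a, _), (b, _) in zip(points, points[1:]):
        t = (a + b) / 2
        # Count training examples the rule "x > t means class 1" gets wrong.
        errors = sum((x > t) != bool(y) for x, y in points)
        if errors < best_errors:
            best_t, best_errors = t, errors
    return best_t, best_errors


# Hypothetical data: hours studied -> passed the exam (1) or not (0).
hours = [1.0, 2.0, 3.0, 6.0, 7.0, 8.0]
passed = [0, 0, 0, 1, 1, 1]

threshold, errors = fit_stump(hours, passed)
print(f"predict 'pass' when hours > {threshold}  (training errors: {errors})")
```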

Moreover, machine learning can be categorized into three primary types: supervised learning, unsupervised learning, and reinforcement learning. Supervised learning involves training a model with labeled data, allowing it to learn specific input-output mappings. In contrast, unsupervised learning works with unlabeled data, enabling the model to identify inherent structures or patterns within the data. Lastly, reinforcement learning trains an agent through rewards and penalties that it receives for its actions and decisions in a dynamic environment. These diverse approaches highlight the versatility and broad applicability of machine learning.
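The following Python sketch illustrates two of these paradigms on toy data, under simplifying assumptions. K-means clustering (unsupervised) discovers two groups in unlabeled numbers, and an epsilon-greedy multi-armed bandit (a simple reinforcement-learning setting) learns by trial and error which of three hypothetical actions yields the highest average reward. The data, reward means, noise level, and function names are all illustrative.

```python
import random


def kmeans_1d(values, k, iterations=20, seed=0):
    """Unsupervised: group unlabeled numbers into k clusters (Lloyd's algorithm)."""
    rng = random.Random(seed)
    centroids = rng.sample(values, k)
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for v in values:
            nearest = min(range(k), key=lambda i: abs(v - centroids[i]))
            clusters[nearest].append(v)
        # Move each centroid to the mean of its cluster (keep it if the cluster is empty).
        centroids = [sum(c) / len(c) if c else centroids[i] for i, c in enumerate(clusters)]
    return sorted(centroids)


def epsilon_greedy(true_means, steps=2000, epsilon=0.1, seed=0):
    """Reinforcement learning (multi-armed bandit): estimate each action's value
    purely from the rewards received, mostly exploiting the best estimate."""
    rng = random.Random(seed)
    estimates = [0.0] * len(true_means)
    counts = [0] * len(true_means)
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(len(true_means))  # explore a random action
        else:
            arm = max(range(len(true_means)), key=lambda i: estimates[i])  # exploit
        reward = rng.gauss(true_means[arm], 0.1)  # noisy reward from the environment
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]  # running average
    return estimates


centroids = kmeans_1d([1.0, 1.2, 0.8, 9.0, 9.5, 10.0], k=2)
estimates = epsilon_greedy([1.0, 2.0, 0.5])
best_arm = max(range(3), key=lambda i: estimates[i])
print("cluster centers:", [round(c, 2) for c in centroids])
print("best action:", best_arm)
```

Neither function is given the answer: k-means never sees labels, and the bandit only observes rewards for the actions it actually tries.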

Neural Networks: The Brain Behind AI

Neural networks are a crucial component of artificial intelligence, loosely inspired by the way the human brain processes information. At the core of a neural network is a collection of interconnected nodes known as neurons. Each artificial neuron, a greatly simplified version of its biological counterpart, receives input, processes it, and produces output. This structure allows neural networks to learn from data and build predictive models, making them essential for many AI applications.
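Concretely, a typical artificial neuron computes a weighted sum of its inputs, adds a bias term, and passes the result through a nonlinear activation function. A minimal Python sketch, assuming the sigmoid activation (one of several common choices, alongside ReLU and tanh) and made-up weights:

```python
import math


def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of inputs plus bias, passed through a sigmoid."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))  # sigmoid squashes z into the range (0, 1)


# Hypothetical inputs, weights, and bias chosen for illustration.
output = neuron(inputs=[1.0, 0.5], weights=[0.8, -0.4], bias=-0.4)
print(round(output, 4))  # prints 0.5498
```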

A neural network typically consists of three kinds of layers: an input layer, one or more hidden layers, and an output layer. The input layer receives raw data, which is then passed through the hidden layers for processing. Each hidden layer is composed of multiple neurons that transform the information and pass it to the next stage. The depth of a neural network, meaning the number of hidden layers, can significantly enhance its ability to recognize complex patterns and solve difficult problems.
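The layer structure can be sketched in a few lines of Python. Each layer below is a list of weight rows (one row per neuron) plus one bias per neuron, and the network is simply a sequence of layers applied one after another. The layer sizes, weight values, and sigmoid activation are arbitrary choices for illustration; in practice the weights are learned, and libraries such as PyTorch or TensorFlow perform the same computation with matrix operations.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense_layer(inputs, weights, biases):
    """Fully connected layer: each row of `weights` feeds one neuron."""
    return [sigmoid(sum(x * w for x, w in zip(inputs, row)) + b)
            for row, b in zip(weights, biases)]


def forward(x, layers):
    """Pass an input vector through each (weights, biases) layer in turn."""
    activation = x
    for weights, biases in layers:
        activation = dense_layer(activation, weights, biases)
    return activation


# Hypothetical network: 3 inputs -> 4 hidden neurons -> 2 hidden neurons -> 1 output.
layers = [
    ([[0.2, -0.5, 0.1], [0.4, 0.3, -0.2], [-0.3, 0.8, 0.5], [0.1, 0.1, 0.1]], [0.0, 0.1, -0.1, 0.0]),
    ([[0.5, -0.6, 0.3, 0.2], [-0.4, 0.2, 0.7, -0.1]], [0.05, -0.05]),
    ([[1.0, -1.0]], [0.0]),
]
output = forward([1.0, 0.5, -1.0], layers)
print(output)
```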

Each connection between neurons carries a weight that determines the strength of the signal transmitted from one neuron to another. These weights are adjusted during a training phase to minimize errors in the output. The key technique is backpropagation: the network measures the difference between expected and actual outputs with a loss function, propagates that error backward to compute how much each weight contributed to it (the gradient), and an optimizer such as gradient descent then adjusts each weight slightly in the direction that reduces the error. Repeating this over many examples is what allows the network to learn, loosely analogous to how humans learn from experience.
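The training loop can be illustrated with a deliberately small, pure-Python sketch in which a network with one hidden layer of four sigmoid neurons learns XOR. Each step runs a forward pass, uses the chain rule (backpropagation) to compute how the error changes with each weight, and applies a plain stochastic-gradient-descent update. The hidden-layer size, learning rate, epoch count, and random seed are arbitrary assumptions; a network this small can occasionally settle in a poor local minimum, which is one reason real projects use frameworks with automatic differentiation and more robust optimizers.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# XOR: a classic task that a network without a hidden layer cannot solve.
data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 0.0)]

rng = random.Random(42)
H = 4  # number of hidden neurons (arbitrary choice)
w1 = [[rng.uniform(-1, 1) for _ in range(2)] for _ in range(H)]  # input -> hidden weights
b1 = [0.0] * H
w2 = [rng.uniform(-1, 1) for _ in range(H)]  # hidden -> output weights
b2 = 0.0
lr = 0.5  # learning rate


def predict(x):
    h = [sigmoid(sum(wi * xi for wi, xi in zip(w1[j], x)) + b1[j]) for j in range(H)]
    y = sigmoid(sum(w2[j] * h[j] for j in range(H)) + b2)
    return h, y


def mean_squared_error():
    return sum((predict(x)[1] - t) ** 2 for x, t in data) / len(data)


initial_loss = mean_squared_error()
for epoch in range(5000):
    for x, t in data:
        h, y = predict(x)  # forward pass
        # Backward pass for the per-example loss 0.5 * (y - t) ** 2:
        # chain rule through the output sigmoid...
        delta_out = (y - t) * y * (1 - y)
        # ...then back through each hidden sigmoid (computed before w2 is updated).
        delta_hidden = [delta_out * w2[j] * h[j] * (1 - h[j]) for j in range(H)]
        # Gradient-descent updates: move each parameter against its gradient.
        for j in range(H):
            w2[j] -= lr * delta_out * h[j]
            for i in range(2):
                w1[j][i] -= lr * delta_hidden[j] * x[i]
            b1[j] -= lr * delta_hidden[j]
        b2 -= lr * delta_out
final_loss = mean_squared_error()
print(f"loss before: {initial_loss:.4f}  after: {final_loss:.4f}")
```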

As a result, neural networks have propelled advancements in fields such as image recognition, natural language processing, and even autonomous systems. By leveraging a structure reminiscent of the human brain, neural networks facilitate the development of sophisticated AI systems capable of understanding and interpreting complex information. This interplay between structure and function underscores the versatility and power of neural networks in pushing the boundaries of artificial intelligence.

Natural Language Processing and AI

Natural Language Processing (NLP) is a specialized area of artificial intelligence that focuses on the interaction between computers and human language. It encompasses the development of algorithms and models that allow machines to analyze, interpret, and generate human language in useful ways. Through NLP, AI systems can model context, sentiment, and the subtleties inherent in human communication.

One of the core functionalities of NLP is text analysis, which involves processes such as tokenization, part-of-speech tagging, and syntactic parsing. These processes help AI systems break down text data into manageable components, enabling them to comprehend language structures and extract actionable insights. Additionally, NLP employs machine learning techniques to enhance the understanding of linguistic nuances, thereby improving the accuracy of AI-generated outputs.
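Tokenization is usually the first of these steps. The Python sketch below uses a simple regular-expression tokenizer and then counts tokens into a “bag of words”, a basic representation that machine-learning models can consume. This is a simplification assumed for illustration: NLP libraries such as spaCy or NLTK handle punctuation, contractions, and non-English scripts far more carefully, and modern language models typically use subword tokenizers instead.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into word tokens (letters, digits, apostrophes)."""
    return re.findall(r"[a-z0-9']+", text.lower())


sentence = "The cat sat on the mat. The mat was warm!"
tokens = tokenize(sentence)
counts = Counter(tokens)  # bag of words: how often each token occurs
print(tokens)
print(counts.most_common(2))  # prints [('the', 3), ('mat', 2)]
```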

With advancements in NLP, various applications have emerged, including chatbots and language translation tools. Chatbots use NLP to interpret user queries and provide relevant responses, mimicking human conversation. This technology is prevalent in customer service, where organizations use chatbots to address inquiries efficiently and improve user experience. Language translation tools, meanwhile, use NLP to convert text from one language to another, facilitating global communication by bridging linguistic barriers. These tools, powered by sophisticated AI algorithms, have improved significantly over the years and now provide near-instant translations that increasingly account for idiomatic expressions and contextual variations.
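Modern chatbots rely on trained language models, but the core step of mapping a user message to an intent can be illustrated with a much simpler technique. The sketch below scores each hypothetical intent by word overlap (Jaccard similarity) with a few example phrases and falls back to a default reply when the best score is below an arbitrary threshold. The intents, replies, threshold, and function names are invented for illustration.

```python
import re


def token_set(text):
    return set(re.findall(r"[a-z0-9']+", text.lower()))


# Hypothetical intents: example phrases and a canned reply for each.
INTENTS = {
    "opening_hours": (["what time do you open", "when are you open"], "We are open 9am to 5pm."),
    "refund": (["i want a refund", "how do i get my money back"], "You can request a refund online."),
}


def jaccard(a, b):
    """Overlap between two token sets (0 = nothing shared, 1 = identical)."""
    return len(a & b) / len(a | b) if a | b else 0.0


def reply(user_message, threshold=0.2):
    words = token_set(user_message)
    best_intent, best_score = None, 0.0
    for intent, (examples, _) in INTENTS.items():
        score = max(jaccard(words, token_set(e)) for e in examples)
        if score > best_score:
            best_intent, best_score = intent, score
    if best_intent is None or best_score < threshold:
        return "Sorry, I didn't understand that."
    return INTENTS[best_intent][1]


print(reply("When do you open?"))  # matches the opening_hours intent
print(reply("Tell me a joke"))     # no intent scores above the threshold
```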

In conclusion, the intersection of AI and linguistics through natural language processing illustrates the transformative potential of artificial intelligence in interpreting and generating human-like language. As NLP continues to evolve, its applications in chatbots and translation services will likely expand, further enriching our interaction with technology and enhancing communication worldwide.

Ethical Considerations in AI Development

The development of artificial intelligence (AI) presents numerous ethical considerations that must be thoughtfully addressed to ensure responsible use of this technology. One primary concern is the potential for biases embedded in AI algorithms, which can occur when the data used to train these systems reflects historical prejudices or societal inequalities. Such biases can result in unfair outcomes, especially in sensitive areas like hiring, law enforcement, and lending. Therefore, it is imperative for developers to actively seek diverse datasets and implement rigorous testing to minimize these biases, allowing AI to serve a broader and fairer purpose.
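Rigorous bias testing often starts with simple group-level metrics. The sketch below computes each group's selection rate from a model's decisions, then the ratio of the lowest rate to the highest, and flags ratios below 0.8, a threshold borrowed from the “four-fifths” rule of thumb in US employment guidelines. The decisions and function names are made up, and this single metric is only a screening check: fairness has several competing definitions, and a passing ratio does not prove that a system is unbiased.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: list of (group, approved) pairs -> approval rate per group."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    return min(rates.values()) / max(rates.values())


# Hypothetical model decisions for applicants from two groups.
decisions = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5
rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 3))
if ratio < 0.8:  # "four-fifths" rule of thumb
    print("Warning: possible adverse impact; investigate further.")
```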

Privacy concerns also arise with the implementation of AI technologies, particularly regarding the data they collect and process. As AI systems increasingly rely on vast amounts of personal data to improve their functionality, there is a heightened risk of infringing on individual privacy rights. Organizations developing AI must adopt strict data governance practices, ensuring transparency about data usage and empowering individuals to control their information. By adhering to ethical data practices, developers can foster public trust in AI technologies.
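One concrete data-governance practice is pseudonymization: replacing direct identifiers before data reaches an analytics or training pipeline. The sketch below, using only Python's standard library, swaps an email address for a keyed hash (HMAC-SHA256). The record fields and the name `PSEUDONYM_KEY` are hypothetical, and the key is generated in place only for illustration; note also that pseudonymized data may still count as personal data under regulations such as the GDPR.

```python
import hashlib
import hmac
import secrets

# Hypothetical key; in practice it would be loaded from a secrets manager, not generated per run.
PSEUDONYM_KEY = secrets.token_bytes(32)


def pseudonymize(identifier: str) -> str:
    """Replace a direct identifier with a keyed hash (HMAC-SHA256).

    The same input always maps to the same token, so records can still be
    joined, and, unlike a plain hash, outsiders without the key cannot
    recompute tokens to check guessed identifiers.
    """
    return hmac.new(PSEUDONYM_KEY, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


record = {"email": "jane@example.com", "age_band": "30-39", "clicks": 42}
safe_record = {**record, "email": pseudonymize(record["email"])}
print(safe_record)
```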

Furthermore, the potential for job displacement due to AI automation poses significant ethical questions. While AI can enhance productivity and efficiency, it also threatens to displace workers in various sectors, raising concerns about economic inequality and job security. It is essential for stakeholders to consider strategies that support workforce transition through retraining programs and educational initiatives, helping individuals adapt to a changing job landscape.

In conclusion, the ethical considerations in AI development are multifaceted, encompassing algorithmic biases, privacy issues, and the social impact of automation. By prioritizing responsible practices and cultivating a collaborative approach among technologists, policymakers, and affected communities, we can navigate the complexities of AI and contribute to its beneficial integration into society.

Real-World Applications of AI

Artificial Intelligence (AI) has permeated numerous sectors, showcasing its potential to revolutionize how industries operate and deliver services. In healthcare, AI-powered systems are used for predictive analytics, which can support earlier diagnosis and personalized treatment plans. Machine learning algorithms analyze large datasets from medical records and can improve the accuracy of disease detection, which in turn can contribute to better patient outcomes.

In the finance sector, AI is employed for fraud detection and risk assessment. By analyzing transaction patterns and customer behavior, AI systems can identify anomalies that might indicate fraudulent activity, thus safeguarding financial institutions and customers alike. Furthermore, algorithmic trading uses AI to process market information at high speed, enabling rapid investment decisions aimed at improving returns.
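Production fraud-detection systems combine many signals and trained models, but the underlying idea of flagging transactions that deviate sharply from a customer's usual pattern can be sketched with a simple statistical rule. The example below uses the modified z-score, which measures distance from the median in units of the median absolute deviation (MAD) and is less distorted by the outlier itself than a mean-based z-score. The 3.5 cutoff is a commonly cited rule of thumb, and the transaction amounts are invented.

```python
import statistics


def flag_anomalies(amounts, threshold=3.5):
    """Flag values whose modified z-score (based on median and MAD) exceeds threshold."""
    median = statistics.median(amounts)
    mad = statistics.median(abs(a - median) for a in amounts)
    if mad == 0:
        return []  # no spread to measure deviations against
    # 0.6745 rescales the MAD so the score is comparable to a standard z-score.
    return [a for a in amounts if 0.6745 * abs(a - median) / mad > threshold]


# Hypothetical card transactions for one customer (in dollars).
history = [23.5, 41.0, 18.2, 37.9, 25.0, 30.4, 22.8, 2450.0, 29.9, 35.1]
print(flag_anomalies(history))  # prints [2450.0]
```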

The transportation industry has also seen a profound impact from AI, particularly in the development of autonomous vehicles. Self-driving technology harnesses AI’s capabilities to navigate and make real-time decisions, potentially reducing accidents and improving traffic efficiency. Moreover, AI assists in logistics and supply chain management by optimizing routes and forecasting demand more accurately, thereby reducing costs and enhancing service delivery.
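Route optimization in logistics is often built on shortest-path search. As a minimal sketch, assuming a small road network with fixed travel times, Dijkstra's algorithm finds the quickest route between two points. Production routing systems layer live traffic data, delivery time windows, and multi-stop vehicle-routing optimization on top of ideas like this. The node names, travel times, and the function name `shortest_route` are hypothetical.

```python
import heapq


def shortest_route(graph, start, goal):
    """Dijkstra's algorithm: cheapest path in a graph with non-negative edge costs.

    graph maps each node to a dict of {neighbor: travel_time}.
    Returns (total_cost, path), or (inf, []) if goal is unreachable.
    """
    queue = [(0, start, [start])]  # priority queue ordered by cost so far
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, weight in graph.get(node, {}).items():
            if neighbor not in visited:
                heapq.heappush(queue, (cost + weight, neighbor, path + [neighbor]))
    return float("inf"), []


# Hypothetical road network: travel times in minutes.
roads = {
    "Depot": {"A": 7, "B": 9, "F": 14},
    "A": {"B": 10, "C": 15},
    "B": {"C": 11, "F": 2},
    "C": {"E": 6},
    "F": {"E": 9},
    "E": {},
}
print(shortest_route(roads, "Depot", "E"))  # prints (20, ['Depot', 'B', 'F', 'E'])
```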

In entertainment, AI algorithms curate content recommendations based on user preferences, thereby enhancing user experience. Streaming services such as Netflix and Spotify use AI to analyze viewing and listening habits and generate personalized suggestions that keep users engaged. Additionally, AI-driven tools are used in video game design, for example adaptive systems that modify gameplay according to a player’s skill level, providing a tailored experience.
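Production recommenders are far more elaborate, but a classic starting point is user-based collaborative filtering: recommend what users with similar tastes liked. The sketch assumes a tiny hypothetical ratings table and measures taste similarity with cosine similarity over co-rated titles; practical systems usually also normalize for each user's rating scale and must handle very sparse data at much larger scale. The users, titles, and function names are invented.

```python
import math

# Hypothetical ratings (1-5) that users gave to titles; a missing title means not watched.
ratings = {
    "ana": {"Drama A": 5, "Comedy B": 1, "Drama C": 4},
    "ben": {"Drama A": 4, "Comedy B": 2, "Drama C": 5, "Drama D": 5},
    "chloe": {"Drama A": 1, "Comedy B": 5, "Comedy E": 4},
}


def cosine(u, v):
    """Cosine similarity over the titles both users rated."""
    common = set(u) & set(v)
    if not common:
        return 0.0
    dot = sum(u[t] * v[t] for t in common)
    norm_u = math.sqrt(sum(u[t] ** 2 for t in common))
    norm_v = math.sqrt(sum(v[t] ** 2 for t in common))
    return dot / (norm_u * norm_v)


def recommend(user, k=1):
    """Score unseen titles by summing other users' ratings weighted by their similarity to `user`."""
    scores = {}
    for other, their in ratings.items():
        if other == user:
            continue
        sim = cosine(ratings[user], their)
        for title, r in their.items():
            if title not in ratings[user]:
                scores[title] = scores.get(title, 0.0) + sim * r
    return sorted(scores, key=scores.get, reverse=True)[:k]


print(recommend("ana"))  # prints ['Drama D']
```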

Overall, the applications of AI across these sectors are not merely supplementary. They represent a significant shift towards more efficient operations and enhanced service delivery, emphasizing the technology’s crucial role in shaping the future of various industries.

The Future of Artificial Intelligence

The landscape of artificial intelligence (AI) is evolving rapidly, driven by continuous advancements and innovations. Researchers are exploring frontiers across the field, including natural language processing, machine learning, and neural networks. As these areas progress, a central question is how far machines can go in learning and adapting autonomously over time.

One of the most promising directions for future AI is its deeper integration into sectors such as healthcare, transportation, and education. In healthcare, for instance, AI technologies are being developed to assist in diagnosing diseases, recommending treatment plans, and even predicting outbreaks. The transportation sector is also changing through autonomous vehicles equipped with AI systems designed to navigate complex environments safely and efficiently.

Another area of interest is the ethical implications surrounding AI technology. As machines become increasingly autonomous, questions regarding accountability, privacy, and bias arise. The ongoing development of ethical frameworks and guidelines will be crucial in ensuring that AI serves humanity positively. Moreover, addressing these challenges will require collaboration among technologists, lawmakers, and ethicists to create robust policies that govern the use of AI.

Additionally, achieving artificial general intelligence (AGI), which refers to AI systems capable of performing any intellectual task a human can, remains a long-term objective. While current AI can excel at narrow tasks, the road to AGI is fraught with technical hurdles, including improving the interpretability, reliability, and adaptability of AI systems.

In conclusion, the future of artificial intelligence holds vast potential and challenges. Continued research and development in this field will likely yield groundbreaking innovations that can benefit society while navigating the complexities and ethical considerations inherent in such advancements.

Frequently Asked Questions (FAQ): Understanding Artificial Intelligence

1. What is Artificial Intelligence (AI) in simple terms? Artificial Intelligence (AI) is a branch of computer science focused on creating smart machines capable of performing tasks that typically require human intelligence. This includes learning from experience, understanding human language, recognizing patterns, and solving complex problems.

2. How does Artificial Intelligence actually work? AI works by combining large amounts of data with fast processing power and algorithms that learn from that data. The system ingests the data, analyzes it for patterns and correlations, and then uses those patterns to make decisions or predictions. In general, more high-quality, relevant data tends to make a model more accurate, although the gains diminish and poor-quality data can make results worse.

3. What is the difference between AI, Machine Learning (ML), and Deep Learning?

- Artificial Intelligence (AI): The overarching concept of machines acting intelligently.

- Machine Learning (ML): A specific subset of AI where computers are trained to learn from data and improve over time without being explicitly programmed for every single step.

- Deep Learning: An advanced subfield of ML that uses multi-layered “neural networks” (inspired by the human brain) to process vast amounts of unstructured data, like images and audio.

4. What are the different types of AI? AI is generally divided into three main categories based on capability:

- Narrow AI (Weak AI): AI designed to perform one specific task well (e.g., Siri, Google Search, spam filters). This is the only type of AI that exists today.

- General AI (Strong AI): A theoretical form of AI where a machine possesses human-level cognitive abilities and can understand or learn any intellectual task a human can.

- Super AI: A futuristic concept where AI surpasses human intelligence and capability across all fields.

5. What are some everyday examples of AI? You interact with AI multiple times a day. Common examples include:

- Virtual assistants like Alexa and Google Assistant.

- Content recommendation engines on YouTube, Netflix, and Spotify.

- Navigation apps like Google Maps that predict real-time traffic.

- Facial recognition technology on smartphones.

- AI chatbots and automated customer support.

6. Will AI replace human jobs? While AI is exceptionally good at automating repetitive, routine tasks, it is more likely to transform the job market than to replace human workers wholesale. AI can act as a powerful tool to boost human productivity. It is likely to eliminate some routine jobs while creating new roles focused on managing, developing, and auditing AI systems.

7. Are there any risks associated with Artificial Intelligence? Yes, like any powerful technology, AI comes with challenges. The main concerns include algorithmic bias (where AI inherits human prejudices from its training data), data privacy issues, and security vulnerabilities. To address these, developers and organizations are increasingly focusing on “Ethical AI” to ensure systems are transparent, fair, and secure.